Martin: I read and discussed my colleague Jon’s notes for a recent presentation he gave and asked him to write them up so they could be shared further. I am delighted to say he agreed to share them here. Jon’s provocations below are framed from a position of considerable learning tech expertise and experience and in my view offer a really thoughtful challenge to dominant narratives related to AI. As you may know, I love a good prod at the hornets’ nest and Jon asks some really fundamental questions that, like the technologies we all find ourselves confronted with, are themselves disruptive.

I do find myself slightly uncomfortable about my role in relation to AI. As a learning technologist, I am frequently asked to train people in how to use it, and to enthuse about all its possibilities. I am expected to generate engagement from academic staff – and to assure them that ‘AI is essential for Industry 5.0’ (or wherever we are up to now). Now I’m not suggesting this is wrong. But I am uncomfortable about the lack of criticality – and lack of precision – underpinning our reasons for using it. I rarely get asked to address the issue of why we should be using it. Industry 5.0 is often given as the reason, but this concept itself appears to be rather vague guesswork about what the future might look like. And the concept of AI is itself amorphous: constantly changing, and encompassing an impossibly broad set of ideas, systems, processes, functions and platforms. To say ‘we must promote AI’ to equip students for ‘Industry 5.0’ is to say we must promote something undefined, to equip students for something unknown. So I end up feeling like an attendant standing in front of a slide at a water park enthusiastically encouraging people to dive into the tunnel, promising them how much fun it will be. When really, I have no idea where the tunnel leads. And nobody else seems to know either.

I’m not even convinced it’s a tunnel. So in this post, I want to suggest two things.

- There are at present two dominant narratives around AI – neither of which properly addresses what people want from it or why we should be using it.

- These narratives appear to be perpetuated within Higher Education.

Based on these suggestions, I want to ask a question:

Can we imagine a different narrative? If so, how? And would it be helpful?

So are we ready? Then we can begin…

This is the first narrative – I call this the ‘hey, look at what you can do!’ narrative. This narrative focuses on all the cool stuff you can do with AI. You know the kind of thing:

Hey look! – you can use AI to generate your own clipart, cute avatars or biologically improbable photos!

You can get AI to predict your email responses, organise your to-do list, and generate your own cartoon characters!

I have been suitably impressed by people who have sent me examples of how they have created AI videos of themselves conducting law lectures while riding horses in the wild west! All very cool. Of course, none of these were things you actually asked for, or thought you needed. And most of the time you don’t really understand why you’re doing it. And yet you feel that somehow you have to. We can feel an imperative to use them – because if we don’t we run the risk of being an outsider. Disadvantaged – or something similar. You can see this in adverts for platforms like ClickUp, Monday.com or Grammarly. The suggestion that you should be using AI to write proper sentences, organise your time and manage your projects – regardless of whether you feel you need support in any of those areas. And of course, if you are NOT using AI – then you are inevitably going to fall behind everyone else.

These tap into a feeling that is very much present when we think about AI. Are you willing to risk being the only one left who can’t use it? The person still trying to fax their job applications to potential employers? AI is simply the way things will have to be done in the future – so get on board with all the cool things AI can do, or be left at the station.

Alternatively, in the second narrative we hear about all the terrifying stuff AI is going to do to you, whether you want it to or not. I call this the ‘AI controls your future’ narrative. You know the kind of thing. AI is going to take over our jobs, make all our decisions for us, write all our songs and presumably, eventually conclude we are surplus to requirements and stick us in small tubes to function as a power source. A lot of films have been based on this narrative – so it’s a good one. People like it.

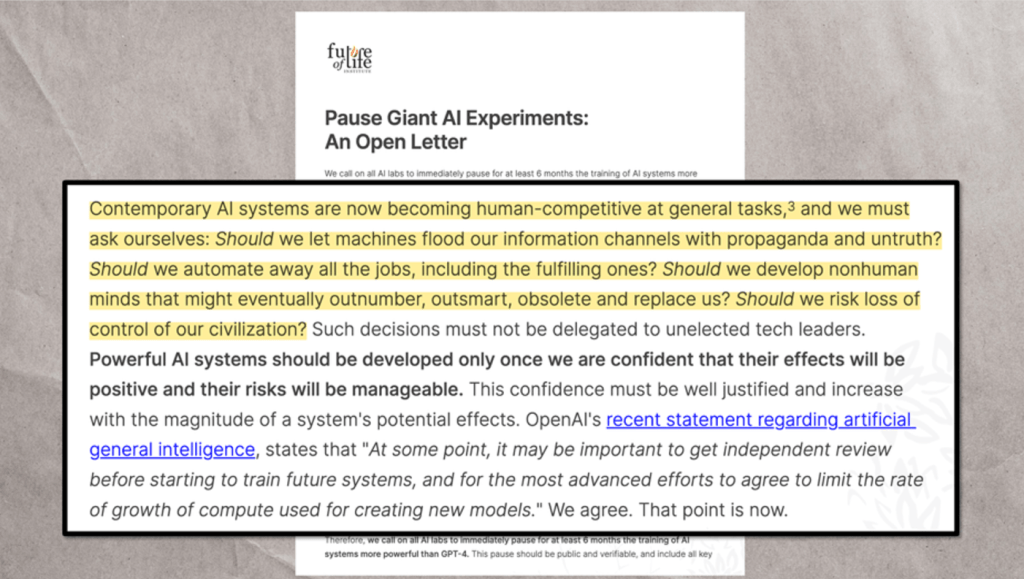

This is the narrative behind those headlines that imply that AI will control the future. It is a dystopian vision – where AI ends up either saving humanity or destroying it. This narrative is reflected in the now-famous open letter published by the Future of Life Institute in 2023, calling for a pause on the training of AI systems more powerful than GPT-4:

Highlighted text reads: “Contemporary AI systems are now becoming human-competitive at general tasks, and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization?”

According to this narrative, civilization is at a tipping point, and it is humanity itself that is at risk. But even as we ponder the fact that Elon Musk was a signatory to this letter, we sense that there is an inevitability to all this. Mustafa Suleyman, one of the key figures in the development of gen AI, describes it as a wave of technology – that cannot be “uninvented or blocked indefinitely” and that “leads humanity toward either catastrophic or dystopian outcomes”. Underpinning this narrative is anxiety and a sense of powerlessness. Anxiety about the impact of AI, and a worry that there is absolutely nothing we can do about it. That we are in the last days of humanity – the last days where things like community, compassion, fallibility, imagination have value.

So – those are the two narratives. AI represents either cool toys that you have to learn to use if you want to stay relevant. Or it is a threat to humanity itself. It is what you can do, or what you must do. What it isn’t, is something you want to do. Now you may already be ahead of me with this. In the whole debate around AI in Universities, we largely seem to be perpetuating these two dominant narratives. We appear increasingly desperate to find some way – any way – of incorporating Gen AI into our modules, partly because – hey look, isn’t it cool? This might engage my students or make them interested, or impress my external examiner. And partly because of the feeling we will be failing them somehow, if we don’t immerse them in AI as soon as possible, because if our students are not able to use AI then how will they get a job? So we try teaching them how to use Copilot to plan their assignments, how to use Claude to develop code, or how to use Gemini to teach them new software. Of course AI isn’t necessary for any of these tasks – but if our students can’t use this kind of tool, they might be left behind.

That’s the first narrative.

At the same time Universities are immersed in the dystopian narratives in which AI poses an existential threat to the values of education. A good example is the issue of academic integrity in the age of AI. The feeling that AI poses a threat to the integrity of education – to authenticity and ethics. I’ve lost count of the number of times I have been told how urgent it is that we get AI detecting software for written assignments. Or how often I have heard people talk about how important it is to develop assessments where AI simply can’t be used.

That’s the second narrative. The one in which AI is a cataclysmic threat that needs to be countered.

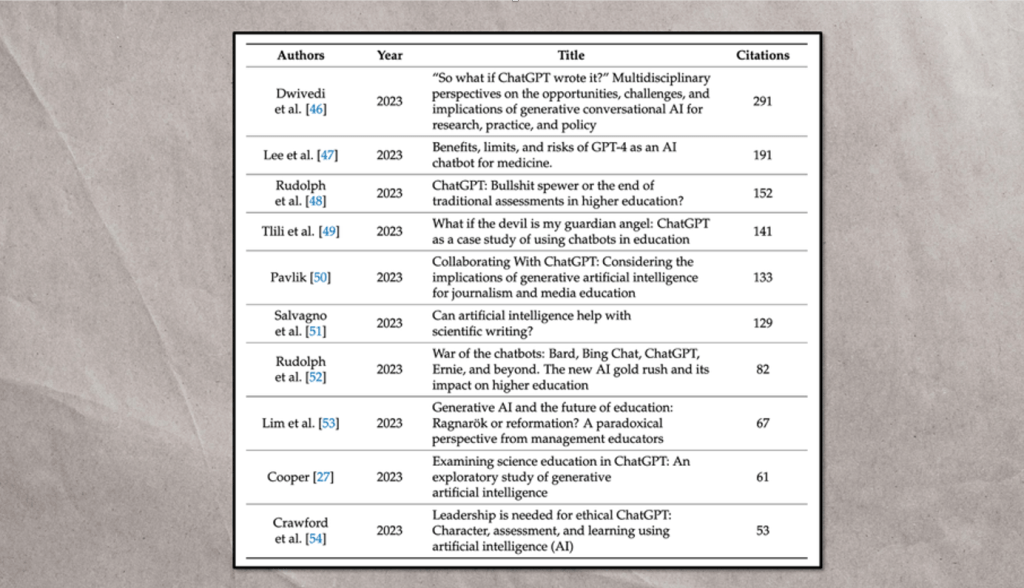

Interestingly, we can see these narratives in systematic reviews listing the most-cited scholarly articles about the use of AI in higher education. Have a look at the titles below and see if you can spot these narratives at work…

Of course there are no right or wrong answers to this – but here are some thoughts:

- The Dwivedi source implies that generative AI is creating significant challenges for ‘research, practice and policy’ – it is destabilising the existing status quo, and demanding that we re-think practice to accommodate it. This is the second narrative.

- The Lee source is coloured by the first narrative – ‘look at these cool AI chatbots!’ The actual study relates more to the need for individualised learning for students, but the title shapes this discussion into one in which the focus is the AI tool.

- The Rudolph source builds the second narrative explicitly into its title – referring directly to the idea that Gen AI spells ‘the end of traditional assessment in higher education’.

- The Tlili source again leans into the second narrative, implying that Gen AI is either a force of extreme evil, or a force of extreme good. Either way, it is something that significantly determines our choices.

- The Pavlik title reflects the second narrative: it describes the tool but suggests that we ‘collaborate’ with it. This suggests that Gen AI is not a tool used to serve our needs, but something that has equal status with human practitioners. But this extends further to suggest this collaboration is unavoidable: the title does not suggest that this collaboration is a choice to be made, but a reality to confront. And if we have no choice but to collaborate, doesn’t that imply we are not of equal status?

I could go on, but I’m sure you get the point. We can see these narratives in student surveys as well. In 2024, the Digital Education Council released its report on student expectations about AI – titled ‘What Students Want’. Among its findings, 86% of students surveyed “claim to use AI in their studies”. The most recent study from HEPI shows this to have risen now to 95% in 2026. Mostly they are using it to search for information and fix their grammar – but over the last few years there have been increasing signs of students using AI more as a kind of digital tutor: explaining concepts and providing feedback. But the interesting bit is when you look for evidence of what is driving them.

Because the DEC survey showed 52% of students actually think AI negatively impacts their academic performance. In their 2026 report, HEPI showed that 51% of students think AI negatively impacts their student experience. An earlier HEPI survey showed 82% of students wanting to use AI less. So why are they using it? Well, according to the 2026 HEPI survey, 68% of students believe “it is essential to understand and be able to use AI effectively”. The JISC survey similarly found that students were “concerned about acquiring the necessary generative AI skills for future workplaces”. This is the first narrative. Gen AI is a cool new toy that everyone is using, and there is almost a FOMO (‘Fear of Missing Out’) attitude towards it. Notice that the emphasis is that Gen AI is necessary for future workplaces – not that Gen AI improves those workplaces. But there is anxiety too. Again in the 2026 survey, 65% of students express fear that AI makes learning less valuable.

A Higher Education for Good survey found many students expressing the worry that AI will “render them incapable of functioning without it”, fearing the “dehumanization of education”. The same report highlights concerns about “the lack of humanity in A.I.” and that “A.I. in education could lead to ubiquitous surveillance of students”. In all the surveys there is a common thread in which students express implicit or explicit fears about how AI threatens the very humanity of educational communities. They demonstrate fears of dehumanization, a distrust of AI, and the feeling that AI will never be able to provide the same value as human production.

This is the second narrative.

And we are still no closer to understanding what people actually want. Back in February, I conducted a survey and asked people ‘what do you wish AI could do for you?’ The results were interesting. Staff wanted AI to ease the burden of marking for them. Students wanted AI to help them with time management. And with tidying. Both groups picked something they found frustrating or difficult and said this was what they wanted AI to help them with. It is significant that staff did not suggest they wanted AI to create their teaching resources for them or write their lectures for them – although at many events like this, that is what they are being shown they can do. And it is significant that students did not suggest they wanted AI to write their essays for them – perhaps because they were worried about admitting it. But at the same time they didn’t want AI to handle their childcare. Or do their cooking. Because some things are difficult, yes. And time-consuming, yes. But they are also fulfilling on a human level – and as the open letter on AI said, we don’t want AI to take over those very human things. If AI is going to do things for us – let it be the mind-numbing, soul-destroying stuff that makes us feel less human. So, what are those things? Not ‘what can AI already do for us’, or ‘what we must learn to do with AI’. But what – in an ideal world – would we actually want AI to do for us?

Or let me put it another way: In the words of Neil Postman…

“What is the problem to which this technology is the solution?”

Think specifically about yourselves as educators – and your students. And try and avoid falling into the two dominant narratives.

Bibliography:

Attewell, S. (no date) How will generative AI affect students and employment?, Luminate. Available at: https://luminate.prospects.ac.uk/how-will-generative-ai-affect-students-and-employment (Accessed: 28 May 2025).

Batista, J., Mesquita, A. and Carnaz, G. (2024) ‘Generative AI and Higher Education: Trends, Challenges, and Future Directions from a Systematic Literature Review.’, Information (2078-2489), 15(11), p. 676. Available at: https://doi.org/10.3390/info15110676.

Brynjolfsson, E., Li, D. and Raymond, L. (2025) ‘Generative AI at Work’, The Quarterly Journal of Economics, 140(2), pp. 889–942. Available at: https://doi.org/10.1093/qje/qjae044.

Chan, C.K.Y. (2024) Generative AI in Higher Education; The ChatGPT Effect. London: Routledge.

Digital Education Council Global AI Student Survey 2024 (2024). Digital Education Council. Available at: https://www.digitaleducationcouncil.com/post/digital-education-council-global-ai-student-survey-2024.

Freeman, J. (no date) ‘Student Generative AI Survey 2025’.

Gebru, T. et al. (2024) Statement from the listed authors of Stochastic Parrots on the “AI pause” letter, Dair Institute. Available at: https://www.dair-institute.org/blog/letter-statement-March2023/ (Accessed: 28 May 2025).

Gulati, P. et al. (2025) ‘Generative AI Adoption and Higher Order Skills’. arXiv. Available at: https://doi.org/10.48550/arXiv.2503.09212.

Hashem, R. et al. (2024) ‘AI to the rescue: Exploring the potential of ChatGPT as a teacher ally for workload relief and burnout prevention’, Research and Practice in Technology Enhanced Learning, 19, pp. 023–023. Available at: https://doi.org/10.58459/rptel.2024.19023.

Jacobides, M.G. and Ma, M.D. (2024) ‘IoD | London Business School Policy Paper – Assessing the expected impact of Generative AI on the UK competitive landscape’.

Laura, R.S. and Chapman, A. (2009) ‘The technologisation of education: philosophical reflections on being too plugged in’, International Journal of Children’s Spirituality, 14(3), pp. 289–298. Available at: https://doi.org/10.1080/13644360903086554.

Leaver, T. and Srdarov, S. (2025) ‘Generative AI and children’s digital futures: New research challenges’, Journal of Children and Media, 19(1), pp. 65–70. Available at: https://doi.org/10.1080/17482798.2024.2438679.

Ogunleye, B. et al. (2024) ‘A Systematic Review of Generative AI for Teaching and Learning Practice’, Education Sciences, 14(6), p. 636. Available at: https://doi.org/10.3390/educsci14060636.

Pang, W. and Wei, Z. (2025) ‘Shaping the Future of Higher Education: A Technology Usage Study on Generative AI Innovations.’, Information (2078-2489), 16(2), p. 95. Available at: https://doi.org/10.3390/info16020095.

Postman, N. (1999) Building a Bridge to the 18th Century: How the Past Can Improve Our Future. New York: Vintage Books.

‘Student Generative Artificial Intelligence Survey 2026’ (2026) HEPI, 12 March. Available at: https://www.hepi.ac.uk/reports/student-generative-ai-survey-2026/ (Accessed: 26 March 2026).

Student perceptions of generative AI report (2024). JISC. Available at: https://www.jisc.ac.uk/reports/student-perceptions-of-generative-ai.

Thomson, H. (2025) ‘“Don’t ask what AI can do for us, ask what it is doing to us”: are ChatGPT and co harming human intelligence?’, The Guardian, 19 April. Available at: https://www.theguardian.com/technology/2025/apr/19/dont-ask-what-ai-can-do-for-us-ask-what-it-is-doing-to-us-are-chatgpt-and-co-harming-human-intelligence (Accessed: 1 May 2025).

Wei, X. et al. (2025) ‘The effects of generative AI on collaborative problem-solving and team creativity performance in digital story creation: an experimental study.’, International Journal of Educational Technology in Higher Education, 22(1), pp. 1–27. Available at: https://doi.org/10.1186/s41239-025-00526-0.

Youth Talks on AI (2024). Switzerland: Higher Education for Good. Available at: https://youth-talks.org/wp-content/uploads/2024/06/Youth-Talks-on-AI-Final-report-03062024.pdf.

Yusuf, A., Pervin, N. and Román-González, M. (2024) ‘Generative AI and the future of higher education: a threat to academic integrity or reformation? Evidence from multicultural perspectives.’, International Journal of Educational Technology in Higher Education, 21(1), pp. 1–29. Available at: https://doi.org/10.1186/s41239-024-00453-6.