Yesterday, via CODE, I had the pleasure of working with two groups studying for the online PGCert LTHE at University of London. I replaced ‘Speaker 1’ with my name in the AI-generated transcript, ran it through (sensible colleague) Claude AI to generate this summary and then asked (much weirder colleague) ChatGPT to illustrate it. I particularly like the ‘Ethical Ai Ai!’ in the second one but generally would not use either image: I am not a fan of this sort of representation. The alt text is verbatim from ChatGPT too.

I forgot to request UK spelling conventions but I’m reasonably happy with the tone. The content feels less passionate and more balanced than I think I am in real life but, realistically, this follow-up post would not exist at all if I’d had to type it up myself.

Introduction

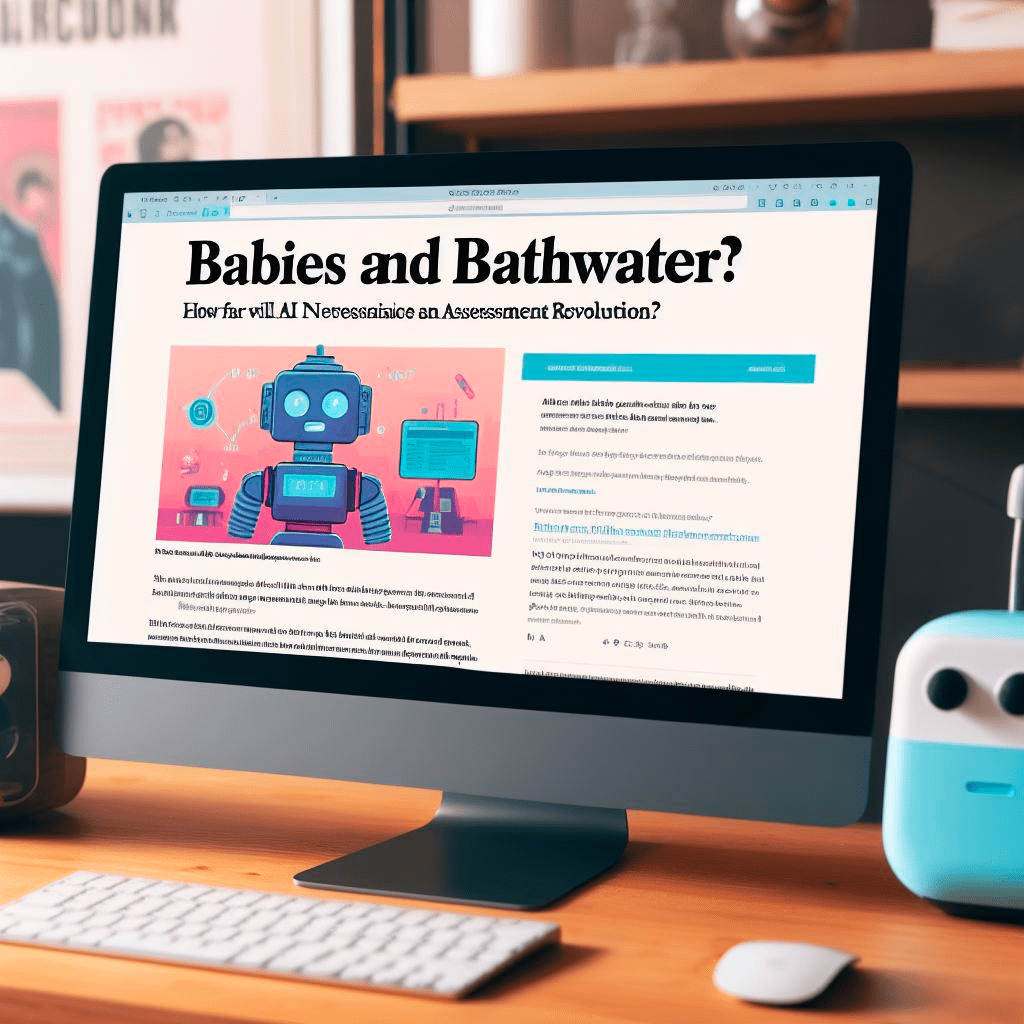

Recent advances in artificial intelligence (AI) are raising profound questions for me as an educator. New generative AI tools like ChatGPT can create convincing text, images, and other media on demand. While this technology holds great promise, it also poses challenges regarding academic integrity, student learning, and the nature of assessment. In a lively discussion with teachers recently, I shared my insights on navigating this complex terrain.

The Allure and Risks of Generative AI

I acknowledge the remarkable capabilities of tools like ChatGPT. In minutes, these AIs can generate personalized lessons, multiple choice quizzes, presentation slides and more tailored to specific educational contexts. Teachers and students alike are understandably drawn to technologies that can enhance learning and make routine tasks easier. However, I also caution that generative AI carries risks. If over-relied on, it can undermine academic integrity, entrench biases, and deprive students of opportunities to develop skills. As I argue, banning these tools outright is unrealistic, but educators must remain vigilant about their appropriate usage. The solution resides in open communication, modifying assessments, and focusing more on process than rote products.

Rethinking Assessment in the AI Era

For me, the rise of generative AI necessitates rethinking traditional assessment methods. With tools that can rapidly generate convincing text and other media, essays and exams are highly susceptible to academic dishonesty. However, I suggest assessment strategies like oral defenses, process-focused assignments, and interactive group work hold more integrity. Additionally, I propose reconsidering the primacy placed on summative grading over formative feedback. Removing or deemphasizing grades on early assignments could encourage intellectual risk-taking. Overall, rather than intensifying surveillance, I argue educators should seize this moment to make assessment more dynamic, dialogic and better aligned with course objectives.

Leveraging AI to Enhance Learning

While acknowledging the risks, I also see generative AI as holding great potential for enhancing my teaching and student learning. I demonstrated how these tools can aid personalized learning via customized study materials and tutoring interactions. They can also streamline time-consuming academic tasks like note-taking, literature reviews and citation formatting. Further, I showed how AI could facilitate more personalized feedback for students at scale. Technologies cannot wholly replace human judgment and mentoring relationships, but they can expand educator capacities. However, to gain the full benefits, both educators and students will need guidance on judiciously leveraging these powerful tools.

Problematizing Generative AI and Bias

One major concern I surface is how biases embedded in training data get reproduced in generative AI outputs. For instance, visual AI tools typically depict professors as predominantly white males. However, I note programmers are already working to address this issue by introducing checks against bias and nudging AIs to produce more diverse output. While acknowledging these efforts, I also caution about potential superficiality if underlying data sources and assumptions go unexamined. Pushing back on biases in AI requires grappling with systemic inequities still present in society and institutions. There are no quick technical fixes for complex socio-historical forces, but civil rights and ethics must be central considerations moving forward.

Navigating Uncharted Waters

In conclusion, I characterize this as a watershed moment full of possibility, but also peril. Preparing myself and fellow educators and students to navigate this terrain will require open, flexible mindsets and reexamining some core assumptions. Knee-jerk reactions could compound risks, while inaction leaves us vulnerable. Addressing challenges presented by AI demands engaging ethical perspectives, but also pedagogical creativity. By orienting toward learning processes over products and developing more robust assessments, educators can cultivate academic integrity and the all-important critical thinking skills needed in an AI-driven world. This will necessitate experimentation, ongoing dialogue and reconsidering conventional practices. While daunting, I ultimately express confidence that with care, wisdom and humanity, educators can guide students through the promise and complexity of this new frontier.