Over a year ago now, in an article for the AI in Education course, Trevor Baxter and I explored what was then still an emerging shift in the way generative AI was being used in HE: from general-purpose large language models towards greater visibility and awareness of tools that could be customised, grounded and shaped by users. The process is still ongoing and slow and there is, I think, a disparity in both awareness and use between students and their teachers (i.e. teachers are less aware and doing it less). At the time, much of the public conversation around AI focused on generic chatbot interactions. Early adopters and compulsive fiddlers like me, and a bunch of people from a range of institutions I have got to know these last few years, had been fiddling around with Custom GPTs in ChatGPT, Projects in Claude and, on its release in the UK (I think towards the end of 2024), Google’s NotebookLM, which changed the user interface and pushed RAG (retrieval-augmented generation) to the forefront of capability awareness. Domain-specific AI assistants grounded in selected documents, tailored instructions and contextual memory addressed, to an extent, hallucination and trust issues. This meant that if you were able to push the many, many other issues to one side (!) (and increasingly it seems that students are definitely finding a way to do this), it completely changed the previously typical ‘short prompt in; long bit of superficial but supremely self-assured content out’ interaction. Put even more simply: generic chats are often poor quality, but using (no-code, non-specialist) techniques to ‘ground’ the AI can lead to much improved outcomes. In that article, we discussed how these developments might address some of the persistent frustrations surrounding AI systems beyond hallucinations and generic outputs, arguing that this might be the trigger towards more effective use in educational workflows.

At the end of the article, we asked participants three broad questions:

- What are your thoughts about the customisation potential of these tools?

- Have you produced or used customised versions of generative AI models?

- If not, what do you think the primary gains might be, and what might we lose if we use them too much or unthinkingly?

The response from the learning community was, and continues to be, really interesting. Across more than 400 comments over the last year or so, participants rarely settled into simplistic ‘AI is amazing’ or ‘AI is terrible’ positions, which is good given the narratives of AI that persist in both regular and social media. Instead, the discussion revealed something consistently more nuanced that I’d probably characterise as cautious (granted, also sometimes exuberant) optimism mixed with genuine (granted, sometimes staring-eyed) concern. One of the clearest themes was that participants saw grounding and customisation as a major improvement over generic AI systems, and my own view is that this means we need to ensure that every stakeholder has at least a broad understanding of the implications. Many course participants described a sense of relief at the possibility of more focused, context-aware outputs:

“It feels less like asking a random stranger on the internet and more like consulting an assistant who has actually read the material.”

Another participant nailed the thing that worries me a lot about how far our students are prepared to go along with AI outputs uncritically:

“The accuracy is exciting, but also dangerous because people may trust it too much precisely because it sounds more grounded.”

No aspect of the discussion generated a more visceral reaction than NotebookLM’s podcast feature. While Google have introduced more tools since, which I think are genuinely valuable in terms of reformatting information, it is still the tool most likely to drop the jaws of those unfamiliar with it (even though the default American accent and tone are often cited by those outside the US as reasons not to use it). Participants used words like “astounding”, “uncanny”, “disturbing”, “impressive”, “surreal” and “frightening”. The realism of the generated dialogue appeared to unsettle people as much as it impressed them. It made me think about how we are firmly in an era where the uncanny valley effect has seeped into audio media.

One participant said:

“I forgot after thirty seconds that the voices were artificial.”

But another noted the commonly expressed feeling:

“It sounds polished and convincing, but also oddly empty, like listening to people perform understanding.”

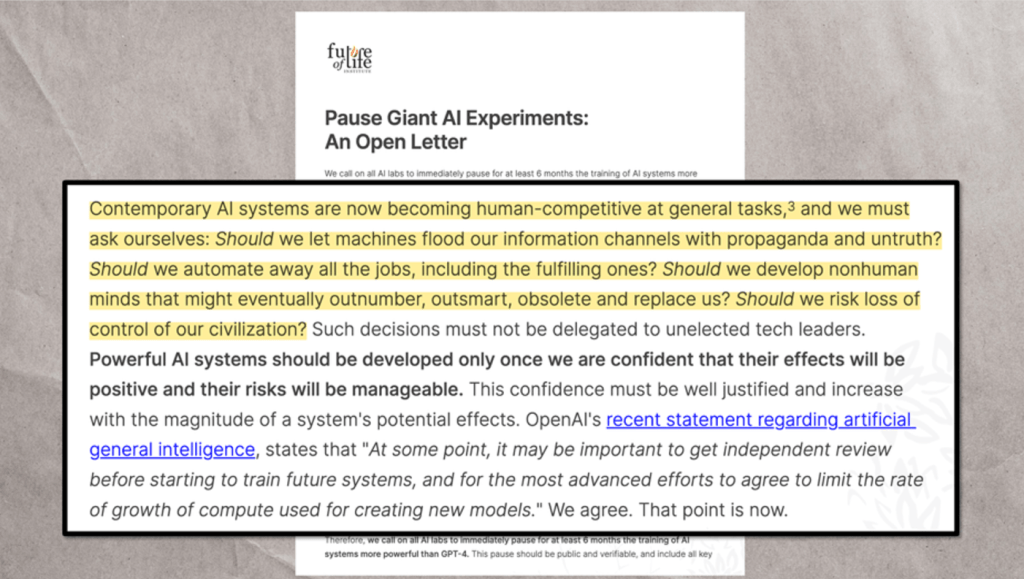

I think that last point is really critical going forward. Many participants were not simply evaluating technical quality because, either explicitly or implicitly, they were addressing issues of trust and deeper connections with content. It’s useful, I think, to acknowledge, as I did in my previous post reflecting on another forum discussion, that all those contributing had made the decision to follow and actively participate in these discussions. This suggests a strong predisposition to think, though I imagine that, in open forums, contributors are also influenced by others in those same threads. Trust was explicitly raised in terms of the implications for the relationships between students and their teachers, and in terms of incorrect information or misinformation. If synthetic conversations become indistinguishable from human ones, what happens to assumptions around expertise, testimony and authenticity?

Increasingly the discussion revealed educators who had been experimenting with customised AI systems in interesting and quite sophisticated ways: building assessment-support GPTs, lesson-planning assistants, revision tools and discipline-specific tutoring systems. Others discussed using NotebookLM or Claude Projects to synthesise literature, organise ideas or support research coding.

“I uploaded policy documents, module guides and marking criteria into a custom GPT and immediately got more useful outputs than from standard ChatGPT.”

Another offered a more cautious perspective:

“It’s brilliant at producing structure and starting points, but weak at nuance and disciplinary judgement.”

Perhaps my tendency to be optimistic is clouding my view here, but participants were not, by and large, treating AI as a replacement for expertise, though would they admit it if they were? (Unlikely, I know.) Many explicitly framed it as a collaborative or augmentative tool that could remove repetitive labour, leaving them, the (presumably!) humans, responsible for interpretation, evaluation and decision-making.

Other common gains included:

- faster synthesis of complex material

- support for revision and study

- a change in the way interactions worked, e.g. clear indication of the source of summary output

- increased accessibility

- multilingual support

- reduced administrative workload

- support for neurodivergent learners

- improved contextualisation of AI outputs

“For the first time, I felt AI was pointing me back towards sources rather than away from them.”

“If AI can help with the labour of organisation and sorting, perhaps humans can spend more time actually thinking.”

At the same time, concerns about cognitive offloading cropped up a lot, and increasingly so. I maintain that cognitive offloading is not necessarily a bad thing, though it currently connotes that way. Writing can be a form of cognitive offloading after all. My most important offload, which I do at least twice a day (now, frequently, via the dictation function in an LLM, I have to admit), is the production of to-do lists. Nevertheless, inappropriate cognitive offloading is something we need to spend more time discussing, I think. Participants repeatedly questioned what might happen if learners increasingly bypassed difficult but important cognitive processes such as reading, synthesis, uncertainty and reflection.

“The danger is not that students will cheat. It is that they may stop wrestling with ideas.”

“Convenience is seductive. The risk is that we slowly outsource the struggle that learning depends on.”

And it is that seduction that I think could be a useful lens to reflect on our behaviours. One thing I noted was that a lot of folk were feeling (like I have for a long time) that there is another cognitive label we have to confront: cognitive dissonance. Many were excited and uneasy at the same time. They argued that there is genuine potential for accessibility, efficiency (I am still a BIG sceptic about this, noting how I am busier, but likely more productive, than ever) and learning support, while also insisting that critical thinking, disciplinary expertise and human judgement must remain central, even though they see patterns of loss of centrality as the treacherous AI terrain is navigated awkwardly and unevenly, often without the expert stewardship (for either teacher or student) that is critical. In the same way that I think we need a nuanced perspective on ‘offloading’, though, I likewise think we need an increasingly nuanced one in terms of the seductive power of customisation. Seduction to me connotes being led astray, but people are and will continue to be seduced by things, and a lot of pleasure will undoubtedly follow too. Seduction need not always be negatively connoted: if we are drawn to the Siren call of customisation, we need to go into experimentation with our eyes and ears open (so avoid Odysseus’ beeswax solution), be aware of the promise of potential new ways of seeing and doing and learning, whilst understanding that there’s a real danger of falling too far under a spell.