I was once told by a fellow English teacher (back in the day) that it was funny I was teaching English. I asked why. ‘Well, you’re all “wiv” and “froo”,’ she said, highlighting my non-standard accent. After that I meekly tried to speak ‘better’ for ages, especially in the company of the other English teachers. It knocked me sideways but over time I fought back in small ways. Not enough though. Conversations and a couple of articles I read on WonkHE this week have given me a chance to think about how AI is offering leverage for change and a critical lens on what we value in so-called academic English.

I have just come from a meeting with Kelly Webb-Davies, whose thinking (nicely exemplified here: ‘I wrote this- or did I?’ and here: ‘On the toxicity of assuming writing is thinking’) and ongoing work at Oxford I have long found a perfect prod to the hornets’ nest that is my brain. What Kelly consistently does so well, and what came through again very strongly in our discussion, is a refusal to accept binary, nuance-less framing of AI as either an existential threat or a panacea for drudgery. Instead, Kelly’s focus in our conversation was on much harder and more important questions: what we think writing is for (anywhere, but especially in academia, where student writing is the means by which students are evaluated, not the thing they are being judged on), why we value it so highly in assessment, and where our assumptions about writing, cognition and academic legitimacy might be misplaced.

Kelly’s work is particularly powerful in contrasting privileged and/or colonial thinking about ‘correct’ and ‘acceptable’ academic writing with translanguaging, our idiolects, cultural and linguistic histories and neurodiversity. Kelly problematises assumptions about writing as a technology, in particular those that suggest writing is a natural proxy for thinking or even THE way of doing thinking (can you do thinking?). In addition, writing has always evolved alongside other technologies, from inscription to print to word processors, and yet higher education continues to treat a narrow, highly codified form of written English as if it were a timeless measure of intellectual engagement. Thinking manifests in multiple ways, many of which are poorly served by conventional academic writing. AI is able to bridge idiolect to ‘accepted’ language in standard forms (see how I keep using inverted commas?), translating between ways of thinking and the forms the academy currently recognises. This then raises questions about how far we might use AI to compromise in this way, or whether we might leverage this truly disruptive phenomenon to challenge yet more of our fundamental beliefs about what is and is not acceptable, as well as how we validate learning. Received wisdom may not be that wise at all, a point I tried to make in my previous post. I’m really looking forward to reading and hearing more about Kelly’s ideas for Voice First Written Assessment (VFWA), which is a really practical and thoughtful approach that values the way people think, speak and write, putting that at the heart of assessments (while enabling valid assessment; everyone’s a winner!).

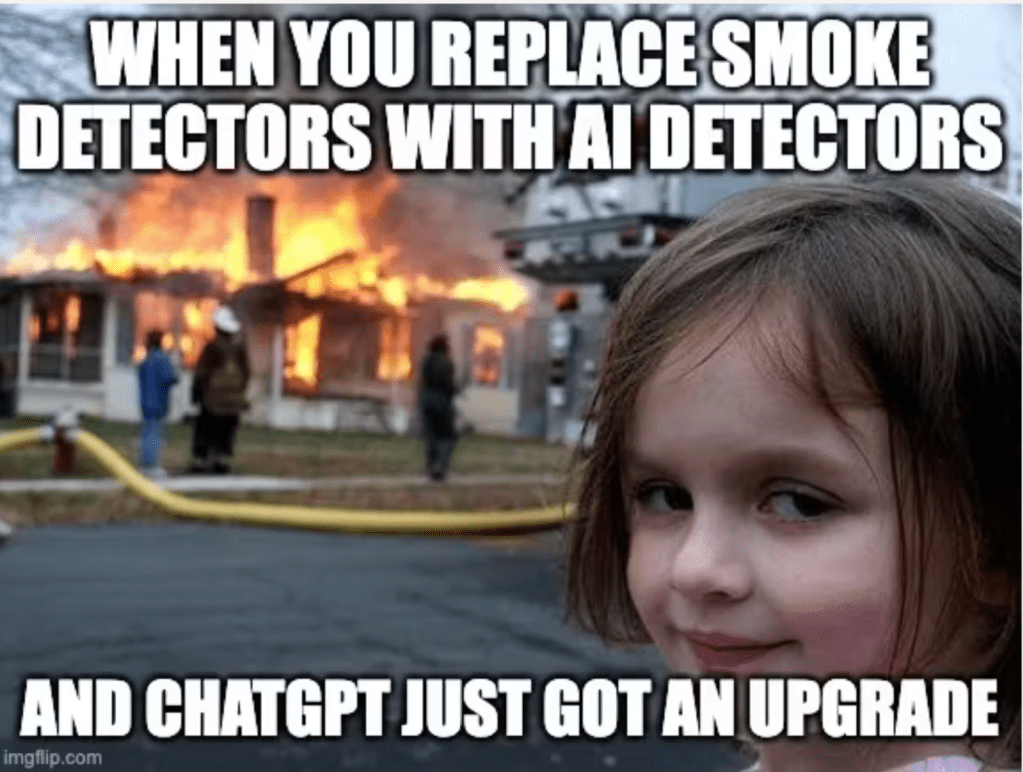

My meeting coincided with publication of a piece from Jim Dickinson earlier this week. Dickinson does not deny the risks of AI, nor does he minimise the evidence that poorly designed uses of AI can undermine learning. The folk (me included) writing or talking about AI often feel obligated to front-load caveats and boundaries (I understand this; I don’t want to talk about that… while thinking: ‘please don’t derail this session by insisting we talk about “y”’), though Dickinson does a pretty good job of weaving a LOT of things in! He very helpfully brings together ‘famous’ (perhaps even notorious) studies from a growing body of research that shows, when taken collectively, how uncritical, convenience-driven AI use can hollow out motivation, attention and the owning of ideas and learning itself, but also how AI can be a boon to ‘productive struggle’ and a valuable scaffold (if used advisedly). What emerged was that it is not helpful to frame the problem as AI being this broad, abstract threat. The ongoing research is critical, but that shouldn’t legitimise stalling interventions, exploration and confrontation of the harsh realities of the extensive, actual, often unsupported use of AI in research and writing (as production) workflows. The way learning and assessment continue to be designed around immature understandings constrained by tradition and conservatism (my reading, not his words) gives a sense that what we thought we were doing was frail even before ChatGPT. The same tool that produces passivity in one design can deepen judgement and persistence in another. Potential and frailty coexist, and the difference is pedagogical intent, design and scaffolding.

In another piece this week on WonkHE, Rex McKenzie makes an observation that will, I am sure, cause debate and consternation, but it’s a fundamental one. McKenzie’s comparison between university expectations and journal publishing practices is pretty stark. He shows that while students in many (most? all?) institutions are policed for using AI to shape language, structure and expression, the professional academic world (as manifested in journal policies on AI use) has largely accepted AI-assisted writing, provided accountability and disclosure remain with the human author. This contrast exposes how much of what we currently assess is not intellectual substance but adherence to a particular linguistic performance. Across academic publishing, trust appears to be growing on the assumption that if writing is honed with AI it is OK, because the research and ideas are owned by the authors. This then raises a really uncomfortable question about permitted AI use (not least in traffic light systems), where ideation is often seen as fine but the use of AI to support writing is taboo. Have we got it arse about face? (I used that phrasing as a less-than-subtle way to signal that no AI is choosing my metaphors.)

Rex McKenzie’s thinking converges strongly with Kelly’s argument. The insistence that “writing is thinking” is not an innocent pedagogical claim; it is historically and culturally situated. It privileges those already fluent in dominant academic registers and marginalises others, whether through class, language background, neurotype or any other trait or characteristic. Treating one form of English as the sole legitimate evidence of cognitive engagement risks mistaking conformity for rigour. AI does not create this problem, but (finally!) it makes it harder to ignore. Talking with Kelly, reading these articles and thinking about the ‘problem’ of AI, I arrive at what will be for many a really uncomfortable conclusion. It’s been said before, but it’s worth saying again through a lens of challenge to the hegemony of standard forms of expression and writing: the AI threat is not primarily about cheating, efficiency or technological disruption. It’s a threat to convention; to conservatism; to tradition; to imagined halcyon times. Let us re-articulate and argue about what we value, what we recognise and what we are willing to redesign. If we continue to treat writing as both the locus and the pre-eminent proof of thinking, we will remain trapped in defensive and incoherent policy positions. If, instead, we take seriously questions of cognitive engagement, judgement and inclusion, then AI catalyses (and can even enable) long-overdue honesty about assessment, pedagogy and the realities of how systems continue to marginalise.

Kelly will be speaking at a compassionate assessment event on 5th March – you can also see my contribution to the compassionate assessment resources via QAA pages here.