Since publishing the latest version of ‘AI in Education’ (17K participants to date & content still available free for 3 weeks from the day you enrol!) a few of the discussion forums have really kept my attention. The post ‘What GenAI tools can do well’ has attracted, in just under a year (6/5/25-17/4/26), 282 comments, of which around 150 are unique contributions/new posts from course participants. Those participants are mostly educators across all sectors (but also students) and, while the majority are in the UK, we have had participants from 149 countries.

The post itself illustrated some common uses of Gen AI all those months ago (!) and shared a conclusion that echoes common guidance to academic staff and students about over-reliance: “Gen AI can be a powerful assistant, but it cannot and should not be used in an attempt to replace cognitive engagement.” At the end of the post I asked: What are your top personal and/or professional uses of generative AI tools?

The teachers, lecturers and students shared examples of what they were doing, along with anxieties and uncertainties. These comments revealed messy, sometimes confused pictures that reflect anything but consensus. Rather than any sort of transformation, either to digital utopia or intellectual ruin, there is nevertheless a sense of change over time that suggests increased normalisation and experimentation alongside heightened wariness.

Across the year the use cases expressed can be clustered into common, moderately common and niche uses:

The most widespread and repeated uses align pretty well with the recent WONKHE survey and HEPI/ KORTEXT surveys of student patterns of use:

- Improving writing (emails, reports, academic work)

- Lesson planning and teaching materials

- Summarising texts and notes

- Brainstorming ideas

- Structuring and organising thinking

Moderately common uses

- Assessment design (questions, rubrics, feedback)

- Personalised learning materials

- Transcription and meeting support

- Explaining concepts and tutoring

More niche uses

- Generating podcasts or audio learning materials

- Creating chatbots or AI-driven learning tools

- Coding support and technical debugging

- Business planning, financial modelling

- Creative production (music, art, storytelling)

- Using AI as a debate partner or Socratic tool

The emphasis in the earlier responses tends to be more tentative and exploratory, with quite a few expressing or arguing for caution and pointing to likely detrimental impacts on learning:

From 6th May 2025: “When students or teachers rely on AI to produce summaries, they miss out on the hard but necessary work of developing patience, curiosity and critical judgment… there’s a real danger that students may become fast but superficial, able to produce results without truly understanding them.”

It’s a subtle shift. Examples like the above, which take a more speculative stance, still emerge, but more often now I see reflective, experiential posts:

8 January 2026: “I found I was becoming overly reliant on it and formed a habit of asking AI too many questions, which undermined my confidence in my decisions. I think it’s important to set boundaries when it comes to how much we incorporate AI in our professional and personal lives, but it can be incredibly helpful.”

14 February 2026: “Overall, I see these tools as productivity and thinking partners rather than replacements for my own judgment.”

The framing has shifted slightly, from wider narratives about threats to the way we do education towards the necessity of regulating personal use.

The second tendency emerging is away from a commitment to experiment or to find a good tool to use for task X:

6 May 2025: “I am going to explore other avenues…”

10 May 2025: “The question comes in my mind which tool to use… there is always some difference…”

The tendency is towards comments where use is embedded, more normalised and certainly not novel:

10 March 2026: “I use AI to draft formal documents and summary versions… organise documents and disparate pieces of information into a coherent well structured text.”

Over this time the range of tasks definitely expands, though the framing of activities in terms of tidying or polishing, unblocking the blank page and helping with stuff that teachers have to do (and often resent as it creeps into evenings and weekends!) still dominates. This apparent acceptance of utility, rather than speculation about it as a possibility, is just about tangible (note these are the folk who choose to contribute to forums on a course they have chosen to participate in!) and is also reflected in the frequency of references to AI as an assistant. In the first 6 months ‘assistant’ does appear, though ‘partner’ and ‘copilot’ are rare. In the last 6 months references have roughly tripled and it is increasingly a dominant framing, with ‘partner’ and ‘copilot’ also used much more frequently.

Another evolution is in how people describe how they work with it, which reinforces the ‘assistant’ framing. Early interactions are often loosely specified. More recent posts emphasise the importance of iteration, structured/well-formed prompting and critical judgement of outputs. There is also a willingness to push back against bland outputs, and source checking (along with encouraging students to engage with that too) is central, even though hallucination as a concept is not actually mentioned.

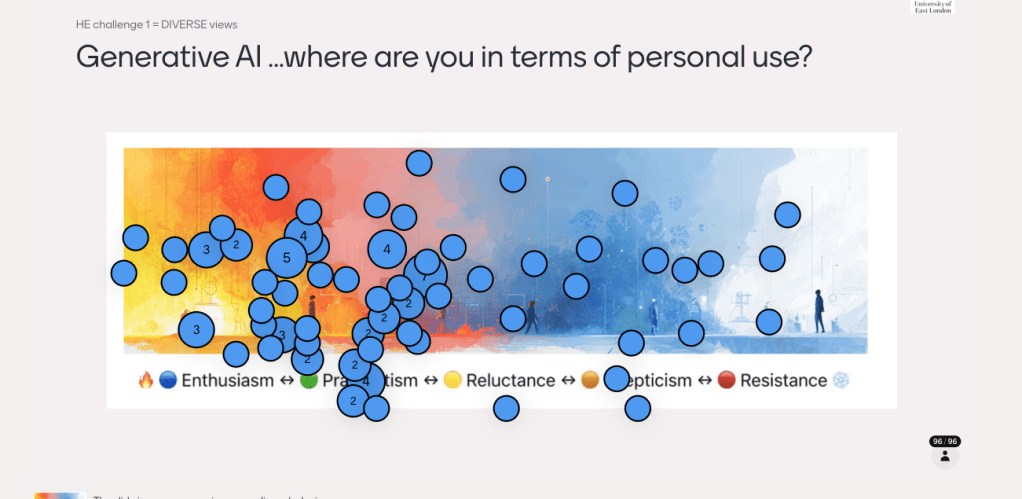

So, if these comments can be taken as even a gentle indicator (and the sense is mirrored at events and with the various polls I conduct in live sessions) then there are gradual shifts in behaviour. This is a slide I have used perhaps half a dozen times in the last 18 months and there’s a definite tendency towards the left (insert your own joke here about the far right!) over that time.

I have no doubt that through the telescoped perspective of our successors some 20 to 30 years in the future this may well look like transformation, though, as these last 3 years will appear but a blip in the histories of education in any context. Early curiosity, tinged so often with the tentativeness of the still novel, seems to have been supplanted by more routine use. AI experience and pragmatic or reluctant acceptance feed a willingness to see where and how AI can become integrated into everyday workflows but, reassuringly, with more sophisticated thinking and understanding emerging about risks and limitations, something that can also be seen in the student use research data.

This is very impressionistic, but my sense is that teacher/lecturer actual use is about a year behind student use (in terms of percentages). The anxieties about permitted and valid use identified by students in the surveys mentioned above (and despite the pretty remarkable numbers of students using these tools routinely) are what might be seen as the next stage on from normalisation. Funnily enough my daughter (who is 14) argued quite convincingly last night why she will not use generative AI if she can avoid it and how her heart sinks when her teachers use AI videos or images. There’s a vibe out there akin to teachers using rap to engage the kids (note I am wincing on behalf of all teachers who have ever attempted this) and my sense is that we are at a stage now where ‘appropriate use’ needs to go through some turbulent (and maybe embarrassing) stages as the teacher AI use numbers begin to match the student numbers and all parties finally recognise and can distinguish between actual utility, sketchy over-reliance and downright sloppy applications.

